Call it paranoia or keen insight, but humans have long pondered the possibility that the end of the world won't come as the result of warring gods or cosmic mishap, but due to our own self-destructive tendencies. Once nomads in the primordial wilds, we've climbed a ladder of technology, taken on the mantle of civilization and declared ourselves masters of the planet. But how long can we lord over our domain without destroying ourselves? After all, if we learned nothing else from "2001: A Space Odyssey," it's that if you give a monkey a bone, it inevitably will beat another monkey to death with it.

Genetically fused to our savage past, we've cut a blood-drenched trail through the centuries. We've destroyed civilizations, waged war and scarred the face of the planet with our progress -- and our weapons have grown more powerful. Following the first successful test of a nuclear weapon on July 16, 1945, Manhattan Project director J. Robert Oppenheimer brooded on the dire implications. Later, he famously invoked a quote from the Bhagavad Gita: "Now I am become death, the destroyer of worlds."

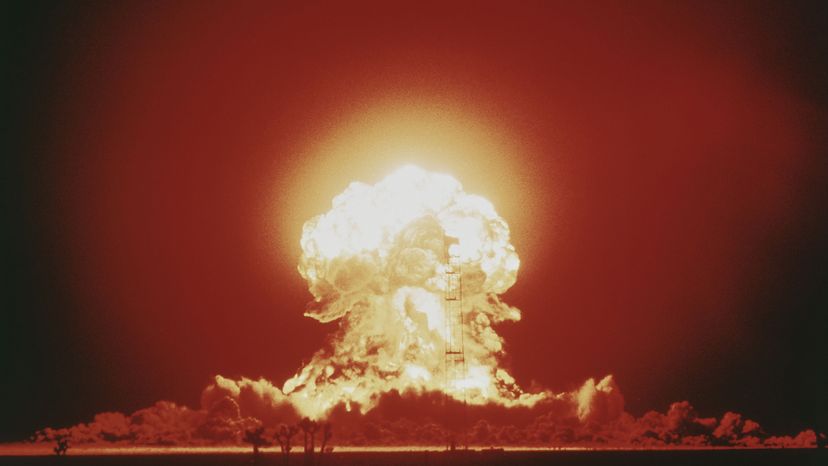

In the decades following that detonation, humanity quaked with fear at atomic weaponry. As the global nuclear arsenal swelled, so, too, did our dread of the breed of war we might unleash with it. As scientists researched the possible ramifications of such a conflict, a new term entered the public vernacular: nuclear winter. If the sight of a mushroom cloud burning above the horizon suggests that the world might end with a bang, then nuclear winter presents the notion that post-World War III humanity might very well die with a whimper.

Since the early 1980s, this scenario has permeated our most dismal visions of the future: Suddenly, the sky blazes with the radiance of a thousand suns. Millions of lives burn to ash and shadow. Finally, as nuclear firestorms incinerate cities and forests, torrents of smoke ascend into the atmosphere to entomb the planet in billowing, black clouds of ash.

The result is noontime darkness, plummeting temperatures and the eventual death of life on planet Earth.

The theory of nuclear winter is essentially one of environmental collateral damage. While a nuclear attack might target a nation's military infrastructure or population centers, the assault could inflict massive harm to Earth's atmosphere.

It's easy to take the air we breathe for granted, but the atmosphere is a vital component of all life on this planet. In fact, scientists believe it co-evolved to its present state along with Earth's unicellular organisms. It protects us from dangerous levels of solar radiation, but also allows the sun to heat our world. Sunlight shines through the atmosphere and warms the planet's surface, which then emits terrestrial radiation that heats the air. If sufficient ash from burning cities and forests ascended into the sky, it could effectively work as an umbrella, shielding large portions of the Earth from the sun. If you diminish the amount of sunlight that makes its way to the surface, then you diminish the resulting atmospheric temperature -- as well as potentially interfere with photosynthesis.
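The umbrella effect described above can be made concrete with a rough back-of-the-envelope sketch. The Python snippet below uses a textbook zero-dimensional energy balance, in which absorbed sunlight equals the heat the planet radiates to space. This is an illustrative assumption, not the method used by any of the studies discussed in this article: the solar constant (1,361 watts per square meter), the albedo (0.3) and the function name are stand-ins chosen for the example, and the model ignores the greenhouse effect, ocean heat storage and the way smoke itself absorbs sunlight.

```python
# Toy zero-dimensional energy balance (an assumption for illustration only):
# absorbed sunlight = emitted thermal radiation (Stefan-Boltzmann law).
STEFAN_BOLTZMANN = 5.670374419e-8  # W / (m^2 K^4)
SOLAR_CONSTANT = 1361.0            # W / m^2, assumed present-day value
ALBEDO = 0.3                       # assumed fraction of sunlight reflected


def equilibrium_temperature_k(blocked_fraction: float) -> float:
    """Effective radiating temperature (kelvins) when smoke blocks
    `blocked_fraction` (0.0 to 1.0) of incoming sunlight."""
    if not 0.0 <= blocked_fraction <= 1.0:
        raise ValueError("blocked_fraction must be between 0 and 1")
    absorbed = SOLAR_CONSTANT * (1.0 - ALBEDO) * (1.0 - blocked_fraction) / 4.0
    return (absorbed / STEFAN_BOLTZMANN) ** 0.25


if __name__ == "__main__":
    baseline = equilibrium_temperature_k(0.0)
    for blocked in (0.0, 0.1, 0.25, 0.5):
        temp = equilibrium_temperature_k(blocked)
        print(f"{blocked:>4.0%} blocked: {temp:6.1f} K "
              f"({temp - baseline:+.1f} K vs. clear sky)")
```

Because temperature scales with the fourth root of absorbed sunlight in this toy model, even a large cut in sunlight yields a proportionally smaller cut in equilibrium temperature. Real climate models are needed to say how fast and how far temperatures would actually fall.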

Examples of this scenario have occurred on a smaller scale in recent history. For instance, the 1883 eruption of the Indonesian volcano Krakatoa blasted enough volcanic ash into the atmosphere to lower global temperatures by 2.2 degrees F (1.2 degrees C) for an entire year [source: Maynard]. Decades earlier in 1815, the eruption of Mount Tambora in Indonesia blocked enough sunlight around the globe to cause what came to be known as "the year without summer" [source: Discovery Channel].

The following year, residents of the United States experienced summer snows and temperatures between 5 and 10 degrees F (3 and 6 degrees C) below average. This drop in temperatures devastated crops and caused hundreds of thousands of deaths -- not counting those who perished in Indonesia. Some scientists theorize that an even greater cataclysm occurred 65 million years ago when an asteroid collided with Earth. Some experts believe this collision, tied to what is called the K-T boundary extinction event, may have ejected enough ash and debris into the atmosphere to cause an impact winter. The premise is the same as nuclear winter, only with a different method of generating the atmospheric debris. Some paleontologists suspect such an impact winter brought about the extinction of the dinosaurs.

Natural disasters aren't the only proven temperature changers. At the close of the 1991 Persian Gulf War, Iraqi President Saddam Hussein torched 736 Kuwaiti oil wells. The fires raged for nine months, during which average local air temperatures fell by 18.3 degrees F (10.2 degrees C) [source: McLaren].

As severe as these examples seem, nuclear winter theorists provided a far bleaker forecast should nuclear war erupt between the nuclear superpowers of the United States and the then-Soviet Union. In the 1980s, theorists predicted decade-long temperature decreases of as much as 72 degrees F (40 degrees C) [source: Perkins]. Such a winter could finish the destruction that nuclear war started, sending the survivors down a chilling path of famine and starvation.
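The paired Fahrenheit and Celsius figures quoted in this section are temperature differences, not thermometer readings, so they convert with the 5/9 scale factor alone and without the 32-degree offset. A minimal Python sketch of that arithmetic follows; the function name and the table are made up for illustration, with the numbers copied from the text above.

```python
def delta_f_to_delta_c(delta_f: float) -> float:
    """Convert a temperature *difference* from Fahrenheit to Celsius degrees.

    A difference uses only the 5/9 scale factor; the 32-degree offset
    applies to absolute readings, not to drops or rises.
    """
    return delta_f * 5.0 / 9.0


# Temperature drops as quoted in the text: (label, degrees F, degrees C).
QUOTED_DROPS = [
    ("Krakatoa, 1883", 2.2, 1.2),
    ("Kuwaiti oil fires, 1991", 18.3, 10.2),
    ("1980s nuclear winter forecast", 72.0, 40.0),
]

if __name__ == "__main__":
    for label, drop_f, quoted_c in QUOTED_DROPS:
        computed_c = delta_f_to_delta_c(drop_f)
        print(f"{label}: {drop_f} F -> {computed_c:.1f} C (text says {quoted_c} C)")
```

Applying the familiar thermometer formula, with its 32-degree offset, to a drop in temperature would give a nonsensical answer, which is why the offset is left out here.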

Nuclear Winter and the Ozone

Some scientists predict that nuclear winter would be followed by an even harsher spring. They theorize that the sunlight bounced back up from the smoke clouds would heat up nitrogen oxides in the stratosphere. At high temperatures, the nitrogen oxides, which formed due to blast-burned oxygen, would deplete the ozone layer at much higher than normal rates.

In Carl Sagan and Richard Turco's book "A Path Where No Man Thought," the two nuclear winter theorists propose six classes of nuclear winter, which provide a framework for understanding the possible atmospheric consequences of modern warfare.

However, nuclear winter is very much a theory -- and a controversial one at that. Next, we'll look at how the theory has evolved and where it stands today.

In many ways, the nuclear winter debate is similar to the global warming debate. In both cases, it's easy to classify one side as alarmist and accuse the other of being in denial. It's also easy to attribute political motivations to either side.

The atmosphere is an incredibly complicated system. When you have 5.5 quadrillion tons (4.99 quadrillion metric tons) of gas and countless local, global, terrestrial and extraterrestrial factors stirring it into motion, it's difficult to understand how it all works. Even advanced computer models lose effectiveness when forecasting weather more than a few days ahead. The use of these models gave birth to the notion of chaos theory and the Butterfly Effect. The smallest change can have enormous consequences, and there's at least a hint of the unpredictable to everything.
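The sensitivity described above can be demonstrated with a toy system. The Python sketch below integrates the Lorenz equations, a three-variable simplification of atmospheric convection that Edward Lorenz published in 1963 and that is closely associated with the Butterfly Effect. It is not a weather model, and the step size, starting point and size of the nudge are arbitrary choices made for this illustration; the parameter values (10, 28 and 8/3) are the ones commonly used with these equations.

```python
import math

# Classic Lorenz parameters (sigma, rho, beta).
SIGMA, RHO, BETA = 10.0, 28.0, 8.0 / 3.0


def lorenz_step(state, dt=0.01):
    """Advance (x, y, z) by one forward-Euler step of the Lorenz equations."""
    x, y, z = state
    dx = SIGMA * (y - x)
    dy = x * (RHO - z) - y
    dz = x * y - BETA * z
    return (x + dx * dt, y + dy * dt, z + dz * dt)


def separation_history(start, nudge=1e-8, steps=5000, dt=0.01):
    """Track the distance between two runs whose starting x differs by `nudge`."""
    a = start
    b = (start[0] + nudge, start[1], start[2])
    distances = []
    for _ in range(steps + 1):
        distances.append(math.sqrt(sum((p - q) ** 2 for p, q in zip(a, b))))
        a, b = lorenz_step(a, dt), lorenz_step(b, dt)
    return distances


if __name__ == "__main__":
    history = separation_history((0.0, 1.0, 1.05))
    for step in (0, 1000, 2000, 3000, 4000, 5000):
        print(f"t = {step * 0.01:5.1f}: separation = {history[step]:.3e}")
```

In a run like this, two trajectories that begin a hundred-millionth apart end up on entirely different paths, which is why small measurement errors limit how far ahead any forecast can be trusted.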

During the 1970s, the National Academy of Sciences and the U.S. Office of Technology Assessment deliberated the possible environmental effects of nuclear war, and in 1982, the Swedish Academy of Sciences published "The Atmosphere after a Nuclear War: Twilight at Noon." This report predicted that smoke from burning cities and forests might diminish sunlight -- with dangerous consequences. In 1983, atmospheric scientist Richard Turco and astronomer Carl Sagan joined three other scientists in publishing "Global Atmospheric Consequences of Nuclear Explosions." This article, known as the TTAPS report (short for the authors' names: Turco, Toon, Ackerman, Pollack and Sagan), generated a great deal of press. The United States and the Soviet Union gave the findings real consideration -- which some credit with calming trigger fingers during the Cold War.

The TTAPS findings depended on 1980s computer weather models, and even today, such technology is far from infallible. While most scientists agree that nuclear war would have some effect on the atmosphere, not everyone agrees on the severity. Author Michael Crichton accused the TTAPS authors of practicing "consensus science," in which speculation, public opinion and politics empower imperfect theories. Crichton argued that while consensus science may sell us something beneficial today, it sets a dangerous precedent for the future.

In 1990, the TTAPS authors published revised findings based on new data. The more moderate results appeased some critics, but there were -- and are -- still dissenting voices. These disagreements come down to four factors, each presenting its share of unknowns or unknowables:

As our understanding of the atmosphere improves, scientists continue to apply the data to the prospect of nuclear war. While it's easy to look at Cold War nuclear scenarios and discount nuclear winter as a threat in the 21st century, recent findings suggest we may be far from safe.

Using modern climate models, scientists Brian Toon and Alan Robock theorize that even a regional nuclear war could cause a marginal nuclear winter for everyone. According to their 2007 findings, if India and Pakistan were to each launch 50 nuclear weapons at each other, the entire globe could experience 10 years of smoke clouds and a three-year temperature drop of approximately 2.25 degrees F (1.25 degrees C) [source: Perkins]. Due in part to this report, the Bulletin of the Atomic Scientists advanced the Doomsday Clock two minutes closer to midnight.

We're not a full century into the nuclear age, but so far we've avoided even regional nuclear war. Will this stalemate hold out? Or will humans eventually get to test nuclear winter theories firsthand?

Sources